Stability AI’s Stable LM 2 1.6B Impresses in SLM Arena

Plus: Nightshade is now available in v1, AlphaCodium beats human competitors at code.

Hello Engineering Leaders and AI Enthusiasts!

Welcome to the 193rd edition of The AI Edge newsletter. This edition brings you Stability AI’s new small language model Stable LM 2 1.6B.

And a huge shoutout to our amazing readers. We appreciate you😊

In today’s edition:

🚀Stability AI introduces Stable LM 2 1.6B

🌑 Nightshade, the data poisoning tool, is now available in v1

🏆 AlphaCodium: A code generation tool that beats human competitors

📚 Knowledge Nugget: Multimodal LM roundup: Unified IO 2, inputs and outputs, Gemini, LLaVA-RLHF, and RLHF questions

Let’s go!

Stability AI introduces Stable LM 2 1.6B

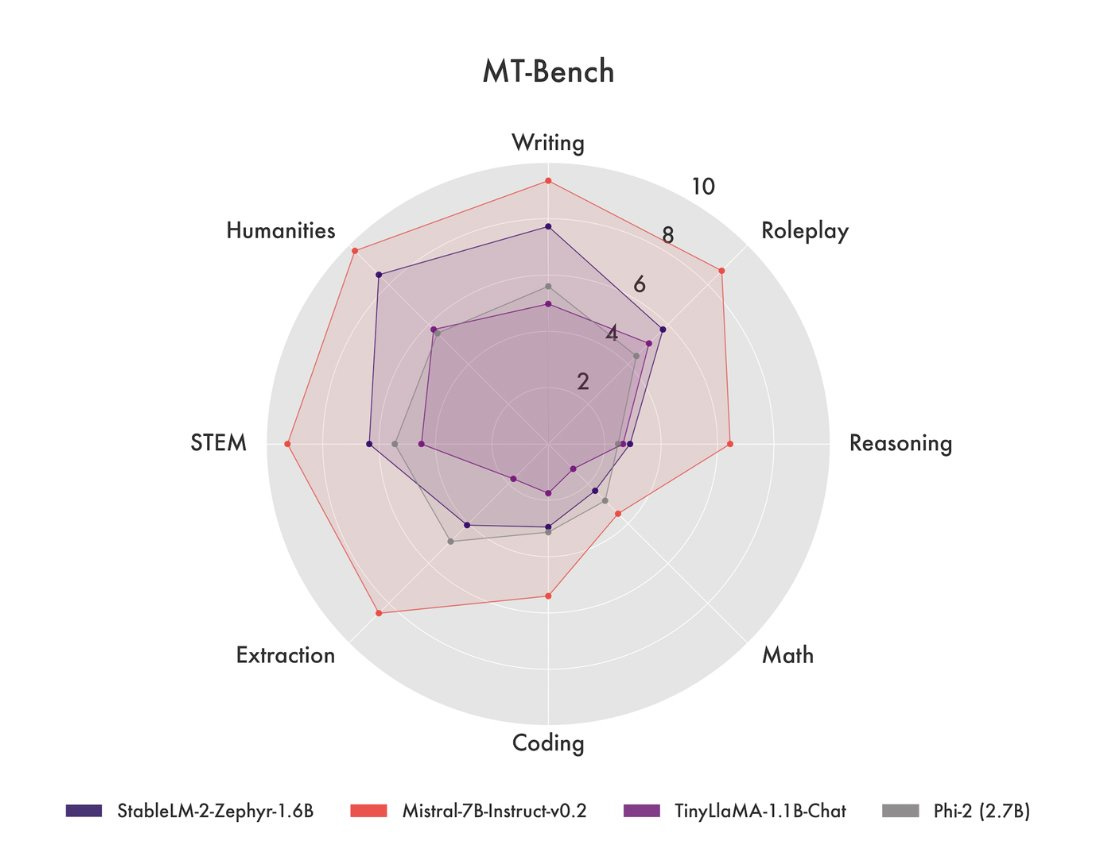

Stability AI released Stable LM 2 1.6B, a state-of-the-art 1.6 billion parameter small language model trained on multilingual data in English, Spanish, German, Italian, French, Portuguese, and Dutch. It leverages recent algorithmic advancements in language modeling to strike a favorable balance between speed and performance, enabling fast experimentation and iteration with moderate resources.

According to Stability AI, the model outperforms other small language models with under 2 billion parameters on most benchmarks, including Microsoft’s Phi-2 (2.7B), TinyLlama 1.1B, and Falcon 1B. It is even able to surpass some larger models, including Stability AI’s own earlier Stable LM 3B model.

Why does this matter?

Size matters for language models because it determines where a model can run, which is why small language models are on the rise. Computers, televisions, and microchips followed a similar trend: they got smaller, thinner, and better over time. Will this be the case for AI too?

Nightshade, the data poisoning tool, is now available in v1

The University of Chicago’s Glaze Project has released Nightshade v1.0, which enables artists to sabotage generative AI models that ingest their work for training.

Glaze, the project's earlier tool, makes imperceptible pixel-level changes to original images that fool AI systems into perceiving a false style. For example, it can make a hand-drawn image look like a 3D rendering to the model.

Nightshade goes one step further: it is designed to use the manipulated pixels to damage the model by confusing it. For example, the AI model might see a car instead of a train. Fewer than 100 of these "poisoned" images could be enough to corrupt an image AI model, the developers suspect.

Why does this matter?

If these “poisoned” images are scraped into an AI training set, they can cause the resulting model to break. This could damage future iterations of image-generating AI models, such as DALL-E, Midjourney, and Stable Diffusion. AI companies are facing a slew of copyright lawsuits, and Nightshade could change the status quo.

AlphaCodium: A code generation tool that beats human competitors

AlphaCodium is a test-based, multi-stage, code-oriented iterative flow that improves the performance of LLMs on code problems. It was tested on a challenging code generation dataset called CodeContests, which includes competitive programming problems from platforms such as Codeforces. The proposed flow consistently and significantly improves results.

On the validation set, for example, GPT-4 accuracy (pass@5) increased from 19% with a single well-designed direct prompt to 44% with the AlphaCodium flow. It also beats DeepMind's AlphaCode and their new AlphaCode 2 without needing to fine-tune a model.
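For readers new to the metric, pass@5 can be read as the share of problems solved by at least one of up to five attempts. Here is a minimal sketch under that reading. The `pass_at_k` helper and the sample results are made up for illustration and are not taken from the AlphaCodium evaluation code.

```python
def pass_at_k(results, k):
    """Fraction of problems where any of the first k attempts passed.

    `results` maps a problem id to a list of booleans, one per attempt,
    in submission order. Illustrative helper only (an assumption, not
    AlphaCodium's own evaluation code).
    """
    if not results:
        raise ValueError("results must contain at least one problem")
    solved = sum(1 for attempts in results.values() if any(attempts[:k]))
    return solved / len(results)


# Made-up outcomes for four hypothetical problems, five attempts each.
sample = {
    "p1": [False, True, False, False, False],
    "p2": [False, False, False, False, False],
    "p3": [True, False, False, False, False],
    "p4": [False, False, False, False, True],
}

print(pass_at_k(sample, 1))  # 0.25
print(pass_at_k(sample, 5))  # 0.75
```

Allowing more attempts can only keep the score the same or raise it, which is why the value of k should always be reported alongside the number.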

AlphaCodium is open source and works with any leading code generation model.
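To make the idea of a test-based iterative flow concrete, here is a toy sketch of a generate, test, and repair loop. It is not AlphaCodium's implementation, which has multiple stages. Every name here (`failing_tests`, `iterative_flow`, `toy_generator`) is hypothetical, and the "model" is a stub that returns a buggy candidate first and a corrected one second.

```python
from typing import Callable, List, Optional, Tuple

Test = Tuple[tuple, object]  # (arguments, expected result)


def failing_tests(func: Callable, tests: List[Test]) -> List[Test]:
    """Return the tests the candidate gets wrong (exceptions count as failures)."""
    failures = []
    for args, expected in tests:
        try:
            ok = func(*args) == expected
        except Exception:
            ok = False
        if not ok:
            failures.append((args, expected))
    return failures


def iterative_flow(
    generate: Callable[[List[Test]], Callable],
    tests: List[Test],
    max_rounds: int = 5,
) -> Optional[Callable]:
    """Ask `generate` for a candidate, run the tests, and feed failures back."""
    feedback: List[Test] = []
    for _ in range(max_rounds):
        candidate = generate(feedback)
        feedback = failing_tests(candidate, tests)
        if not feedback:
            return candidate  # all tests pass
    return None  # gave up after max_rounds


def toy_generator() -> Callable[[List[Test]], Callable]:
    """Stand-in for an LLM call (assumption): buggy candidate, then a fixed one."""
    attempts = iter([lambda a, b: a - b, lambda a, b: a + b])
    return lambda feedback: next(attempts)


tests = [((1, 2), 3), ((0, 0), 0), ((-1, 1), 0)]
solution = iterative_flow(toy_generator(), tests)
print(solution(2, 3))  # 5
```

In a real setup, the stub would be replaced by a model call that receives the failing tests as part of its prompt.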

Why does this matter?

Code generation problems differ from common natural language problems, so many prompting techniques optimized for natural language tasks may not be optimal for code generation. AlphaCodium explores beyond traditional prompting and shifts the paradigm from prompt engineering to flow engineering.

Knowledge Nugget: Multimodal LM roundup: Unified IO 2, inputs and outputs, Gemini, LLaVA-RLHF, and RLHF questions

Everyone knows that multimodal models are going to be a big theme for the year. But why?

To the author, it is based on grand goals for what AI should be able to do and on complementing the core functions of our information processing capabilities. With society's increasing reliance on visual content from platforms like TikTok and YouTube, image inputs offer a richer set of training data. On the output side of things, the Gemini announcement impresses by natively generating images, introducing a separation between generation and information processing.

The multimodal worldview is definitely a work in progress, and so is the notation for multimodal large language models (MLLMs). We need much clearer terminology when we want to differentiate between models like OpenAI’s GPT-4V and Google’s Gemini. OpenAI’s model only reads image inputs, while Google’s also outputs images. That is likely a huge difference in architecture and training methods.

This post roughly follows the normal training pipeline, starting with a paper about pretraining, moving through data collection, and finishing with LLaVA-RLHF and open questions.

Why does this matter?

The article is a timely and insightful exploration of the evolving landscape of MLLMs. It gives a detailed examination of models such as Unified IO 2 and Gemini with a focus on their architecture and capabilities, helping readers understand the intricacies of these advanced systems. It also highlights the ongoing challenges and questions in the development of MLLMs.

What Else Is Happening❗

🌐WHO releases AI ethics and governance guidance for large multi-modal models.

The guidance outlines over 40 recommendations for consideration by governments, technology companies, and healthcare providers to ensure the appropriate use of LMMs to promote and protect the health of populations. (Link)

💰Sam Altman seeks to raise billions to set up a network of AI chip factories.

Altman has had conversations with several large potential investors in the hopes of raising the vast sums needed for chip fabrication plants, or fabs, as they’re known colloquially. The project would involve working with top chip manufacturers, and the network of fabs would be global in scope. (Link)

🚀Two Google DeepMind scientists are in talks to leave and form an AI startup.

The pair has been talking with investors about forming an AI startup in Paris and discussing initial financing that may exceed €200 million ($220 million), a large sum even for the buzzy field of AI. The company, known at the moment as Holistic, may be focused on building a new AI model. (Link)

🔍Databricks tailors an AI-powered data intelligence platform for telecoms and NSPs.

Dubbed Data Intelligence Platform for Communications, the offering combines the power of the company’s data lakehouse architecture, generative AI models from MosaicML, and partner-powered solution accelerators to give communication service providers (CSPs) a quick way to start getting the most out of their datasets and grow their business. (Link)

🤖Amazon Alexa is set to get smarter with new AI features.

Amazon plans to introduce a paid subscription tier of its voice assistant, Alexa, later this year. The paid version, expected to debut as “Alexa Plus,” would be powered by a newer model, internally referred to as “Remarkable Alexa,” which would provide users with more conversational and personalized AI technology. (Link)

New to the newsletter?

The AI Edge keeps engineering leaders & AI enthusiasts like you on the cutting edge of AI. From machine learning to ChatGPT to generative AI and large language models, we break down the latest AI developments and how you can apply them in your work.

Thanks for reading, and see you tomorrow. 😊